As a leading information provider for over 40 years, LexisNexis has extensive experience solving complex queries and big data challenges. We built that experience by managing a vast set of publicly available resources and by delivering solutions that help agencies solve unstructured data challenges and information assurance issues. We process over 77 million records per day and maintain over 2 petabytes of unique data. LexisNexis adheres to the strictest privacy, security and compliance standards and upholds data provenance.

Our vast data sources include: Public Records, Global News, Watch Lists, Periodicals, Journals, Scientific Data, Business Data, and Legal Content.

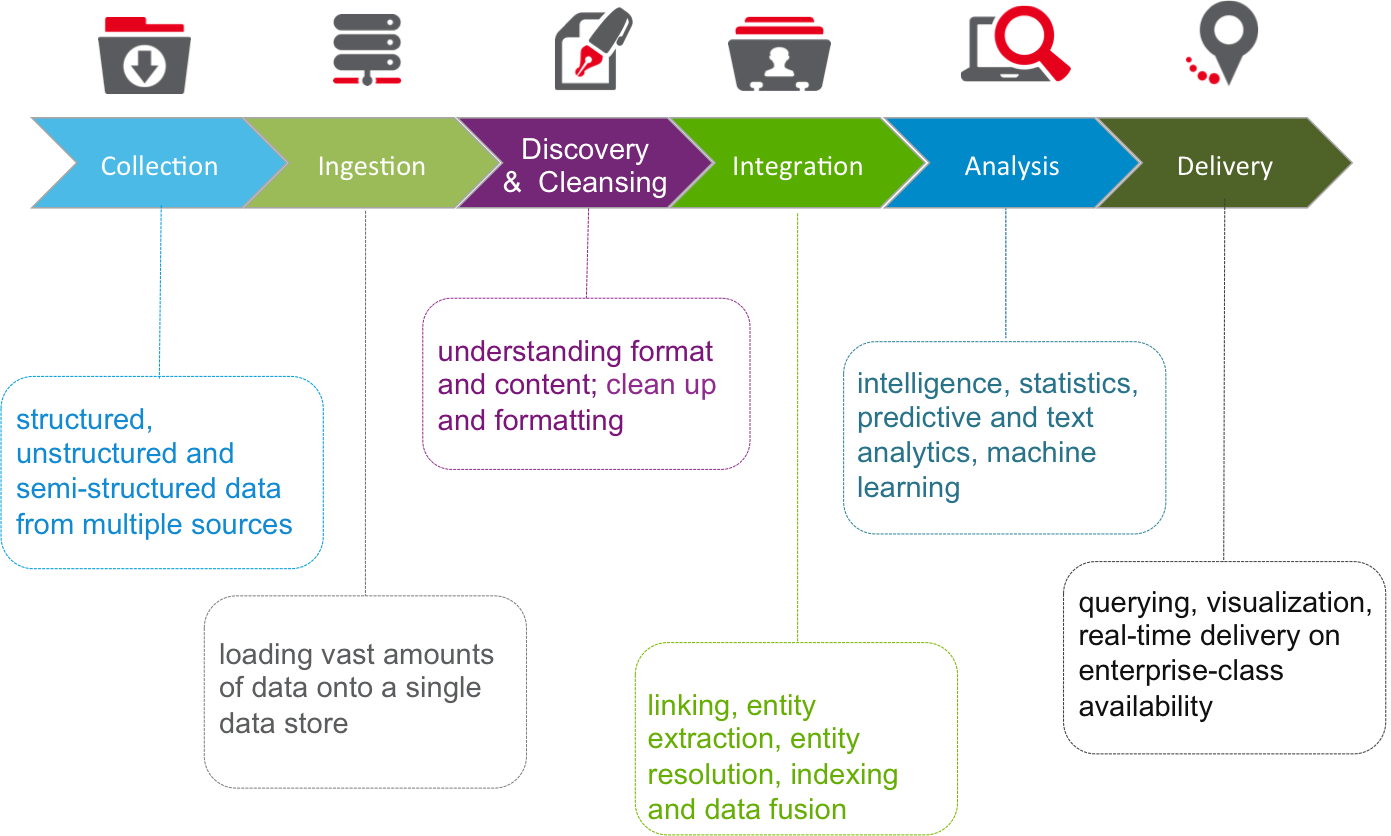

Capabilities include:

- Data hygiene

- Data fusion

- Data triage

- Data mining

- Data linking

- Exploratory data analysis

- Information assurance

- Network analysis

- SmartIndexing Technology™

- Visual data discovery

- Massive scalability

- Cloud deployment

The HPCC Difference

To manage, sort, link, and analyze billions of records in under a second, LexisNexis designed a data-intensive supercomputer built on our own High Performance Computing Cluster (HPCC) platform, which has been proven over the past 10 years with customers who need to sort through billions of records. Customers such as the federal government, law enforcement, leading banks, insurance companies and utilities depend on LexisNexis technology and information solutions to help them make better decisions faster. The supercomputing platform is available as an open source solution called HPCC Systems®, and is an alternative to Hadoop.

Designed to manage the most complex and data-intensive analytical problems, HPCC Systems can process, analyze and find links and associations in high volumes of complex data significantly faster and more accurately than current technology systems. HPCC Systems scales linearly from tens to thousands of nodes, handling many petabytes and supporting millions of transactions per day. It delivers a single platform, a single architecture and a single programming language for efficient processing.

Key Features of HPCC Systems

- Runs on Amazon cloud

- Is extendable

- Integrates with Hadoop

- Massively scalable

- Enterprise class support

Its easy-to-use programming language means less time and less code.

The core of HPCC Systems is its Enterprise Control Language (ECL). ECL is a declarative, non-procedural, data-centric programming language, highly optimized for large-scale data management and query processing. It automatically distributes the workload across all nodes of the massively parallel HPCC clusters, so data analysts and data scientists can simply define the results they need from their big data manipulation and analysis without worrying about how those results are computed.
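To make the declarative idea concrete, here is a minimal sketch in Python, not ECL. It is only an analogy: the `Query` class, the `run` function and the sample records are hypothetical names invented for illustration and are not part of HPCC Systems. The analyst states which rows are wanted and in what order, and a toy "engine" decides how to filter, sort and merge the data held on several simulated nodes.

```python
from dataclasses import dataclass
from heapq import merge
from typing import Callable, Iterable


@dataclass(frozen=True)
class Query:
    """Declarative description of a result: which rows, in what order.

    Hypothetical type for illustration; not an HPCC Systems or ECL construct.
    """
    where: Callable[[dict], bool]
    order_by: str


def run(query: Query, partitions: Iterable[list]) -> list:
    """Toy engine: filter and sort each partition independently,
    then merge the already-sorted partial results into one list."""
    sort_key = lambda row: row[query.order_by]
    partials = [
        sorted((row for row in part if query.where(row)), key=sort_key)
        for part in partitions
    ]
    return list(merge(*partials, key=sort_key))


# Sample data split across three simulated nodes (assumed values).
partitions = [
    [{"name": "Carol", "age": 41}, {"name": "Bob", "age": 27}],
    [{"name": "Alice", "age": 34}],
    [{"name": "Dave", "age": 19}, {"name": "Erin", "age": 52}],
]

# The analyst only states the desired result, not how to compute it.
adults_by_name = Query(where=lambda r: r["age"] >= 30, order_by="name")
result = run(adults_by_name, partitions)
print([r["name"] for r in result])  # ['Alice', 'Carol', 'Erin']
```

The query definition never mentions partitions or nodes; only `run` knows how the data is laid out. That separation is the property the paragraph above attributes to ECL, although the real platform's planning and distribution are far more sophisticated than this sketch.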

For more information on HPCC and ECL, visit http://hpccsystems.com